* [PATCH v2 1/4] workflows: Add vLLM workflow for LLM inference and production deployment

2025-10-04 16:38 [PATCH v2 0/4] vLLM and the vLLM production stack Luis Chamberlain

@ 2025-10-04 16:38 ` Luis Chamberlain

2025-10-04 16:38 ` [PATCH v2 2/4] vllm: Add DECLARE_HOSTS support for bare metal and existing infrastructure Luis Chamberlain

` (4 subsequent siblings)

5 siblings, 0 replies; 13+ messages in thread

From: Luis Chamberlain @ 2025-10-04 16:38 UTC (permalink / raw)

To: Chuck Lever, Daniel Gomez, kdevops

Cc: Devasena Inupakutika, DongjooSeo, Joel Fernandes,

Luis Chamberlain

Add support for deploying and testing vLLM inference engine and the vLLM

Production Stack. The workflow enables automated testing of both vLLM

as a single-node inference server and the production stack's

cluster-wide orchestration capabilities including routing, scaling,

and distributed caching. We start off with CPU support for both.

For the production stack two replicas are requested so two engines,

each one requiring 16 GiB memory. Given other requirements we ask for

at least 64 GiB RAM for the production stack vllm CPU test.

To get the production stack up and running you just use:

make defconfig-vllm-production-stack-cpu KDEVOPS_HOSTS_PREFIX="demo"

make

make bringup

make vllm AV=2

At this point you end up with two replicas serving through the

vLLM production stack router.

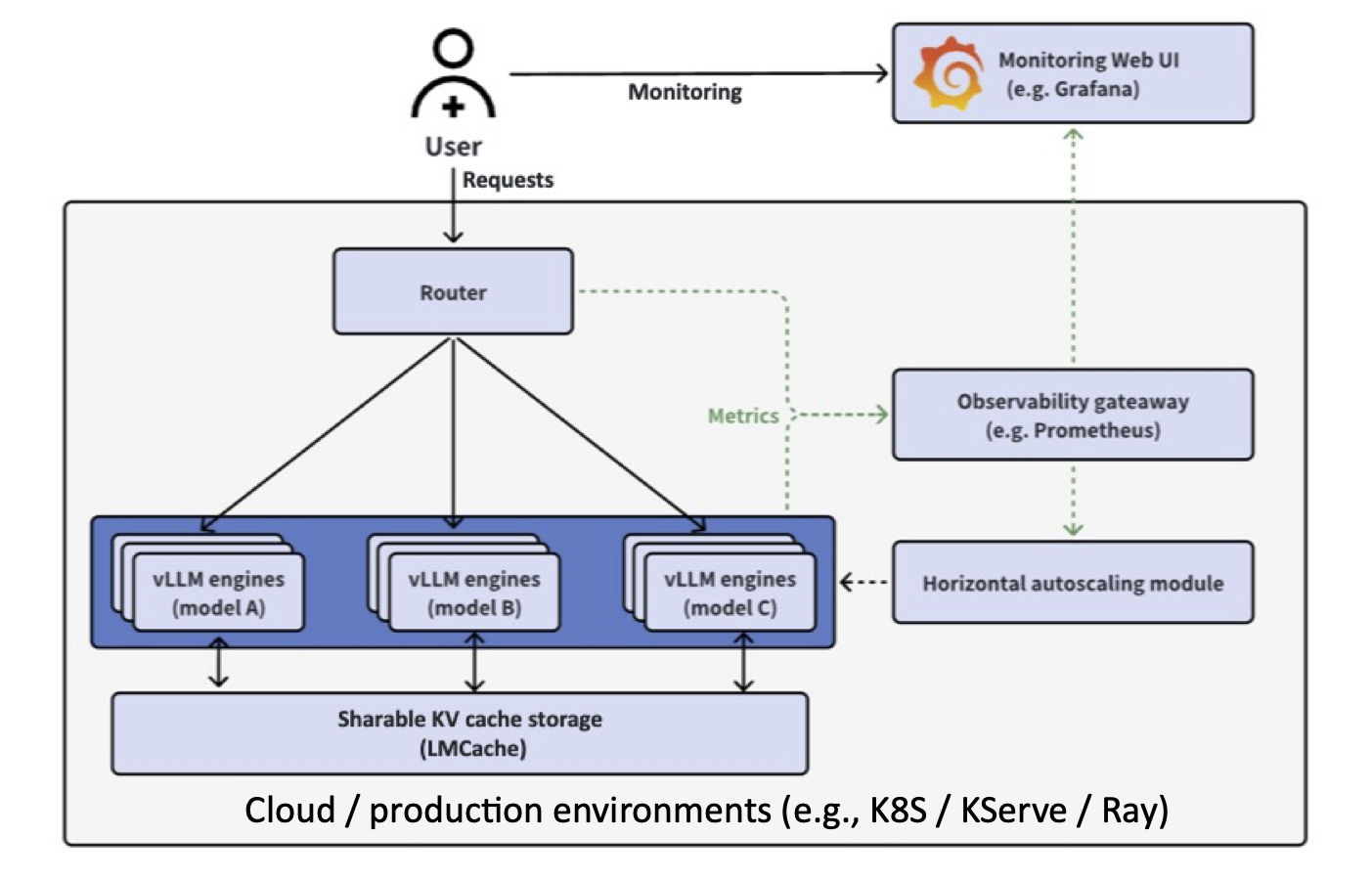

vLLM is a high-performance inference engine for large language models,

optimized for throughput and memory efficiency through PagedAttention

and continuous batching. The vLLM Production Stack builds on top of this

engine to provide cluster-wide serving with intelligent request routing,

distributed KV cache sharing via LMCache, unified observability, and

autoscaling across multiple model replicas.

The implementation supports three deployment methods: simple Docker

containers for development, Kubernetes with the official Production

Stack Helm chart for cluster deployments

(https://github.com/vllm-project/production-stack), and bare metal with

systemd for direct hardware access. Each method shares common

configuration through Kconfig while maintaining deployment-specific

optimizations.

Testing can be performed with either CPU-only or GPU-accelerated

inference. CPU testing uses openeuler/vllm-cpu images to validate the

vLLM API and the production stack's orchestration layer without

requiring GPU hardware, making it suitable for CI/CD pipelines and

development workflows. This enables testing of the router's routing

algorithms (round-robin, session affinity, prefix-aware), service

discovery, load balancing, and API compatibility. GPU testing validates

full production scenarios including LMCache distributed cache sharing,

tensor parallelism, and autoscaling behavior.

The workflow integrates Docker registry mirror support with automatic

detection via 9P mounts. When /mirror/docker is available, the system

automatically configures Docker daemon registry-mirrors for transparent

pull-through caching, reducing deployment time without requiring manual

configuration. The detection uses the libvirt gateway IP to ensure

proper routing from containers and minikube pods.

Image configuration follows Docker's native registry-mirrors pattern

rather than rewriting image names. This preserves the original

repository paths like 'openeuler/vllm-cpu:latest' and

'ghcr.io/vllm-project/production-stack/router:latest' while still

benefiting from mirror caching when available.

Status monitoring is provided through:

make vllm-status

make vllm-status-simplified

which parse deployment state and present it with context-aware guidance

about next steps. The vllm-quick-test target provides rapid smoke

testing across all configured nodes with timing measurements and

proper exit codes for CI integration.

To test an LLM query:

make vllm-quick-test

We provide basic documentation to help clarify the distinction between

vLLM (the inference engine) and the Production Stack (the orchestration

layer). For more details refer to the official release announcement at:

https://blog.lmcache.ai/2025-01-21-stack-release/

The long term plan is to scale with mocked engines, and then also

real GPUs support both bare metal and on the cloud, leveraging

kdevops's cloud agnostic power for any workflow.

Here's an example quick test:

mcgrof@beefy-server /xfs1/mcgrof/vllm/kdevops (git::vllm-v2)$ make vllm-quick-test

========================================

vLLM Quick Test

========================================

Prompt: "kdevops is"

Max tokens: 30

Nodes to test: 1

Testing Baseline node: lpc-vllm

----------------------------------------

Node IP: 192.168.122.170

Starting kubectl port-forward...

Sending request: "kdevops is"

✓ Success!

Duration: 15.747292458s

Full response: "kdevops iseasily a higher level doctor than your list.

really it depends on as on what doc is what 15 less ifmay its just personal preferences."

Full JSON response:

{

"id": "cmpl-2f031a35c5364d3aaf2b9f0007d46ae5",

"object": "text_completion",

"created": 1759424719,

"model": "facebook/opt-125m",

"choices": [

{

"index": 0,

"text": " easily a higher level doctor than your list.\nreally it depends on as on what doc is what 15 less ifmay its just personal preferences.\n",

"logprobs": null,

"finish_reason": "length",

"stop_reason": null,

"prompt_logprobs": null

}

],

"usage": {

"prompt_tokens": 5,

"total_tokens": 35,

"completion_tokens": 30,

"prompt_tokens_details": null

},

"kv_transfer_params": null

}

========================================

All tests passed!

========================================

Then for a synthetic benchmark:

make vllm-benchmark

You should end up with results in workflows/vllm/results/html/

I have put demo results of a synthetic run and also a real workload

on a virtual 64 vcpus 64 GiB DRAM here:

https://github.com/mcgrof/demo-vllm-benchmark

Generated-by: Claude AI

Signed-off-by: Luis Chamberlain <mcgrof@kernel.org>

---

.gitignore | 1 +

PROMPTS.md | 31 +

README.md | 26 +-

defconfigs/vllm | 40 +

defconfigs/vllm-production-stack-cpu | 45 ++

defconfigs/vllm-quick-test | 42 ++

kconfigs/Kconfig.libvirt | 3 +

kconfigs/workflows/Kconfig | 28 +

playbooks/roles/gen_hosts/defaults/main.yml | 1 +

playbooks/roles/gen_hosts/tasks/main.yml | 15 +

.../gen_hosts/templates/workflows/vllm.j2 | 65 ++

playbooks/roles/gen_nodes/defaults/main.yml | 1 +

playbooks/roles/gen_nodes/tasks/main.yml | 36 +

playbooks/roles/linux-mirror/tasks/main.yml | 1 +

playbooks/roles/vllm/defaults/main.yml | 17 +

.../vllm/tasks/configure-docker-data.yml | 187 +++++

.../roles/vllm/tasks/deploy-bare-metal.yml | 227 ++++++

playbooks/roles/vllm/tasks/deploy-docker.yml | 105 +++

.../vllm/tasks/deploy-production-stack.yml | 252 +++++++

.../vllm/tasks/install-deps/debian/main.yml | 70 ++

.../roles/vllm/tasks/install-deps/main.yml | 12 +

.../vllm/tasks/install-deps/redhat/main.yml | 108 +++

.../vllm/tasks/install-deps/suse/main.yml | 50 ++

playbooks/roles/vllm/tasks/main.yml | 591 +++++++++++++++

playbooks/roles/vllm/tasks/setup-helm.yml | 33 +

.../roles/vllm/tasks/setup-kubernetes.yml | 236 ++++++

.../roles/vllm/templates/vllm-benchmark.py.j2 | 152 ++++

.../vllm/templates/vllm-container.service.j2 | 80 ++

.../vllm/templates/vllm-deployment.yaml.j2 | 94 +++

.../vllm/templates/vllm-helm-values.yaml.j2 | 63 ++

.../vllm-prod-stack-official-values.yaml.j2 | 154 ++++

.../templates/vllm-upstream-values.yaml.j2 | 151 ++++

.../roles/vllm/templates/vllm-visualize.py.j2 | 434 +++++++++++

playbooks/vllm.yml | 11 +

scripts/vllm-quick-test.sh | 167 +++++

scripts/vllm-status-summary.py | 404 ++++++++++

workflows/Makefile | 4 +

workflows/vllm/Kconfig | 699 ++++++++++++++++++

workflows/vllm/Makefile | 118 +++

workflows/vllm/README.md | 322 ++++++++

40 files changed, 5074 insertions(+), 2 deletions(-)

create mode 100644 defconfigs/vllm

create mode 100644 defconfigs/vllm-production-stack-cpu

create mode 100644 defconfigs/vllm-quick-test

create mode 100644 playbooks/roles/gen_hosts/templates/workflows/vllm.j2

create mode 100644 playbooks/roles/vllm/defaults/main.yml

create mode 100644 playbooks/roles/vllm/tasks/configure-docker-data.yml

create mode 100644 playbooks/roles/vllm/tasks/deploy-bare-metal.yml

create mode 100644 playbooks/roles/vllm/tasks/deploy-docker.yml

create mode 100644 playbooks/roles/vllm/tasks/deploy-production-stack.yml

create mode 100644 playbooks/roles/vllm/tasks/install-deps/debian/main.yml

create mode 100644 playbooks/roles/vllm/tasks/install-deps/main.yml

create mode 100644 playbooks/roles/vllm/tasks/install-deps/redhat/main.yml

create mode 100644 playbooks/roles/vllm/tasks/install-deps/suse/main.yml

create mode 100644 playbooks/roles/vllm/tasks/main.yml

create mode 100644 playbooks/roles/vllm/tasks/setup-helm.yml

create mode 100644 playbooks/roles/vllm/tasks/setup-kubernetes.yml

create mode 100644 playbooks/roles/vllm/templates/vllm-benchmark.py.j2

create mode 100644 playbooks/roles/vllm/templates/vllm-container.service.j2

create mode 100644 playbooks/roles/vllm/templates/vllm-deployment.yaml.j2

create mode 100644 playbooks/roles/vllm/templates/vllm-helm-values.yaml.j2

create mode 100644 playbooks/roles/vllm/templates/vllm-prod-stack-official-values.yaml.j2

create mode 100644 playbooks/roles/vllm/templates/vllm-upstream-values.yaml.j2

create mode 100644 playbooks/roles/vllm/templates/vllm-visualize.py.j2

create mode 100644 playbooks/vllm.yml

create mode 100755 scripts/vllm-quick-test.sh

create mode 100755 scripts/vllm-status-summary.py

create mode 100644 workflows/vllm/Kconfig

create mode 100644 workflows/vllm/Makefile

create mode 100644 workflows/vllm/README.md

diff --git a/.gitignore b/.gitignore

index a1017d66..7d84f047 100644

--- a/.gitignore

+++ b/.gitignore

@@ -91,6 +91,7 @@ playbooks/roles/linux-mirror/linux-mirror-systemd/mirrors.yaml

workflows/selftests/results/

workflows/minio/results/

+workflows/vllm/results/

workflows/linux/refs/default/Kconfig.linus

workflows/linux/refs/default/Kconfig.next

diff --git a/PROMPTS.md b/PROMPTS.md

index 5a788f71..79d5b204 100644

--- a/PROMPTS.md

+++ b/PROMPTS.md

@@ -5,6 +5,37 @@ and example commits and their outcomes, and notes by users of the AI agent

grading. It is also instructive for humans to learn how to use generative

AI to easily extend kdevops for their own needs.

+## Adding new AI/ML workflows

+

+### Adding vLLM Production Stack workflow

+

+**Prompt:**

+I have placed in ../production-stack/ the https://github.com/vllm-project/production-stack.git

+project. Familiarize yourself with it and then add support for as a new

+I workflow, other than Milvus AI on kdevops.

+

+**AI:** Claude Code

+**Commit:** TBD

+**Result:** Tough

+**Grading:** 50%

+

+**Notes:**

+

+Adding just vllm was fairly trivial. However the production stack project

+lacked any clear documentation about what docker container image could be

+used for CPU support, and all docker container images had one or another

+obscure issue.

+

+So while getting the vllm and the production stack generally supported was

+faily trivial, the lack of proper docs make it hard to figure out exactly what

+to do.

+

+Fortunately the implementation correctly identified the need for Kubernetes

+orchestration, included support for various deployment options (Minikube vs

+existing clusters), and integrated monitoring with Prometheus/Grafana. The

+workflow supports A/B testing, multiple routing algorithms, and performance

+benchmarking capabilities.

+

## Extending existing Linux kernel selftests

Below are a set of example prompts / result commits of extending existing

diff --git a/README.md b/README.md

index 9986f1cc..a59bda76 100644

--- a/README.md

+++ b/README.md

@@ -285,10 +285,30 @@ For detailed documentation and demo results, see the

### AI workflow

-kdevops now supports AI/ML system benchmarking, starting with vector databases

-like Milvus. Similar to fstests, you can quickly set up and benchmark AI

+kdevops now supports AI/ML system benchmarking, including vector databases

+and LLM serving infrastructure. Similar to fstests, you can quickly set up and benchmark AI

infrastructure with just a few commands:

+#### vLLM Production Stack

+Deploy and benchmark large language models using the vLLM Production Stack:

+

+```bash

+make defconfig-vllm

+make bringup

+make vllm

+make vllm-benchmark

+```

+

+The vLLM workflow provides:

+- **Production LLM Deployment**: Kubernetes-based vLLM serving with Helm

+- **Request Routing**: Multiple algorithms (round-robin, session affinity, prefix-aware)

+- **Observability**: Integrated Prometheus and Grafana monitoring

+- **Performance Features**: Prefix caching, chunked prefill, KV cache offloading

+- **A/B Testing**: Compare different model configurations

+

+#### Milvus Vector Database

+Benchmark vector database performance for AI applications:

+

```bash

make defconfig-ai-milvus-docker

make bringup

@@ -303,6 +323,7 @@ The AI workflow supports:

- **Demo Results**: View actual benchmark HTML reports and performance visualizations

For details and demo results, see:

+- [kdevops vLLM workflow documentation](workflows/vllm/)

- [kdevops AI workflow documentation](docs/ai/README.md)

- [Milvus performance demo results](docs/ai/vector-databases/milvus.md#demo-results)

@@ -358,6 +379,7 @@ want to just use the kernel that comes with your Linux distribution.

* [kdevops selftests docs](docs/selftests.md)

* [kdevops reboot-limit docs](docs/reboot-limit.md)

* [kdevops AI workflow docs](docs/ai/README.md)

+ * [kdevops vLLM workflow docs](workflows/vllm/)

# kdevops general documentation

diff --git a/defconfigs/vllm b/defconfigs/vllm

new file mode 100644

index 00000000..ba0ccfa7

--- /dev/null

+++ b/defconfigs/vllm

@@ -0,0 +1,40 @@

+# vLLM configuration with Latest Docker deployment

+CONFIG_KDEVOPS_FIRST_RUN=n

+CONFIG_LIBVIRT=y

+CONFIG_LIBVIRT_VCPUS=8

+CONFIG_LIBVIRT_MEM_32G=y

+

+# Workflow configuration

+CONFIG_WORKFLOWS=y

+CONFIG_WORKFLOWS_TESTS=y

+CONFIG_WORKFLOWS_LINUX_TESTS=y

+CONFIG_WORKFLOWS_DEDICATED_WORKFLOW=y

+CONFIG_KDEVOPS_WORKFLOW_DEDICATE_VLLM=y

+

+# vLLM specific configuration

+CONFIG_VLLM_LATEST_DOCKER=y

+CONFIG_VLLM_K8S_MINIKUBE=y

+CONFIG_VLLM_HELM_RELEASE_NAME="vllm"

+CONFIG_VLLM_HELM_NAMESPACE="vllm-system"

+CONFIG_VLLM_MODEL_URL="facebook/opt-125m"

+CONFIG_VLLM_MODEL_NAME="opt-125m"

+CONFIG_VLLM_REPLICA_COUNT=1

+CONFIG_VLLM_USE_CPU_INFERENCE=y

+CONFIG_VLLM_REQUEST_CPU=8

+CONFIG_VLLM_REQUEST_MEMORY="32Gi"

+CONFIG_VLLM_REQUEST_GPU=0

+CONFIG_VLLM_MAX_MODEL_LEN=2048

+CONFIG_VLLM_DTYPE="float32"

+CONFIG_VLLM_TENSOR_PARALLEL_SIZE=1

+CONFIG_VLLM_ROUTER_ENABLED=y

+CONFIG_VLLM_ROUTER_ROUND_ROBIN=y

+CONFIG_VLLM_OBSERVABILITY_ENABLED=y

+CONFIG_VLLM_GRAFANA_PORT=3000

+CONFIG_VLLM_PROMETHEUS_PORT=9090

+CONFIG_VLLM_API_PORT=8000

+CONFIG_VLLM_API_KEY=""

+CONFIG_VLLM_HF_TOKEN=""

+CONFIG_VLLM_BENCHMARK_ENABLED=y

+CONFIG_VLLM_BENCHMARK_DURATION=60

+CONFIG_VLLM_BENCHMARK_CONCURRENT_USERS=10

+CONFIG_VLLM_BENCHMARK_RESULTS_DIR="/data/vllm-benchmark"

diff --git a/defconfigs/vllm-production-stack-cpu b/defconfigs/vllm-production-stack-cpu

new file mode 100644

index 00000000..72f5796a

--- /dev/null

+++ b/defconfigs/vllm-production-stack-cpu

@@ -0,0 +1,45 @@

+# vLLM Production Stack configuration with official Helm chart

+CONFIG_KDEVOPS_FIRST_RUN=n

+CONFIG_LIBVIRT=y

+CONFIG_LIBVIRT_VCPUS=64

+CONFIG_LIBVIRT_MEM_64G=y

+

+# Workflow configuration

+CONFIG_WORKFLOWS=y

+CONFIG_WORKFLOWS_TESTS=y

+CONFIG_WORKFLOWS_LINUX_TESTS=y

+CONFIG_WORKFLOWS_DEDICATED_WORKFLOW=y

+CONFIG_KDEVOPS_WORKFLOW_DEDICATE_VLLM=y

+

+# vLLM Production Stack specific configuration

+CONFIG_VLLM_PRODUCTION_STACK=y

+CONFIG_VLLM_K8S_MINIKUBE=y

+CONFIG_VLLM_VERSION_LATEST=y

+CONFIG_VLLM_HELM_RELEASE_NAME="vllm-prod"

+CONFIG_VLLM_HELM_NAMESPACE="vllm-system"

+CONFIG_VLLM_PROD_STACK_REPO="https://vllm-project.github.io/production-stack"

+CONFIG_VLLM_PROD_STACK_CHART_VERSION="latest"

+CONFIG_VLLM_PROD_STACK_ROUTER_IMAGE="ghcr.io/vllm-project/production-stack/router"

+CONFIG_VLLM_PROD_STACK_ROUTER_TAG="latest"

+CONFIG_VLLM_PROD_STACK_ENABLE_MONITORING=y

+CONFIG_VLLM_PROD_STACK_ENABLE_AUTOSCALING=n

+CONFIG_VLLM_MODEL_URL="facebook/opt-125m"

+CONFIG_VLLM_MODEL_NAME="opt-125m"

+CONFIG_VLLM_REPLICA_COUNT=2

+CONFIG_VLLM_USE_CPU_INFERENCE=y

+CONFIG_VLLM_REQUEST_CPU=8

+CONFIG_VLLM_REQUEST_MEMORY="20Gi"

+CONFIG_VLLM_REQUEST_GPU=0

+CONFIG_VLLM_MAX_MODEL_LEN=2048

+CONFIG_VLLM_DTYPE="float32"

+CONFIG_VLLM_TENSOR_PARALLEL_SIZE=1

+CONFIG_VLLM_ROUTER_ENABLED=y

+CONFIG_VLLM_ROUTER_ROUND_ROBIN=y

+CONFIG_VLLM_OBSERVABILITY_ENABLED=y

+CONFIG_VLLM_GRAFANA_PORT=3000

+CONFIG_VLLM_PROMETHEUS_PORT=9090

+CONFIG_VLLM_API_PORT=8000

+CONFIG_VLLM_BENCHMARK_ENABLED=y

+CONFIG_VLLM_BENCHMARK_DURATION=60

+CONFIG_VLLM_BENCHMARK_CONCURRENT_USERS=10

+CONFIG_VLLM_BENCHMARK_RESULTS_DIR="/data/vllm-benchmark"

diff --git a/defconfigs/vllm-quick-test b/defconfigs/vllm-quick-test

new file mode 100644

index 00000000..39bed05f

--- /dev/null

+++ b/defconfigs/vllm-quick-test

@@ -0,0 +1,42 @@

+# vLLM Production Stack quick test configuration (CI/demo)

+CONFIG_KDEVOPS_FIRST_RUN=n

+CONFIG_LIBVIRT=y

+CONFIG_LIBVIRT_VCPUS=4

+CONFIG_LIBVIRT_MEM_16G=y

+

+# Workflow configuration

+CONFIG_WORKFLOWS=y

+CONFIG_WORKFLOWS_TESTS=y

+CONFIG_WORKFLOWS_LINUX_TESTS=y

+CONFIG_WORKFLOWS_DEDICATED_WORKFLOW=y

+CONFIG_KDEVOPS_WORKFLOW_DEDICATE_VLLM=y

+

+# vLLM specific configuration - Quick test mode

+CONFIG_VLLM_PRODUCTION_STACK=y

+CONFIG_VLLM_K8S_MINIKUBE=y

+CONFIG_VLLM_HELM_RELEASE_NAME="vllm"

+CONFIG_VLLM_HELM_NAMESPACE="vllm-system"

+CONFIG_VLLM_MODEL_URL="facebook/opt-125m"

+CONFIG_VLLM_MODEL_NAME="opt-125m"

+CONFIG_VLLM_REPLICA_COUNT=1

+CONFIG_VLLM_REQUEST_CPU=2

+CONFIG_VLLM_REQUEST_MEMORY="8Gi"

+CONFIG_VLLM_REQUEST_GPU=0

+CONFIG_VLLM_GPU_TYPE=""

+CONFIG_VLLM_MAX_MODEL_LEN=512

+CONFIG_VLLM_DTYPE="auto"

+CONFIG_VLLM_GPU_MEMORY_UTILIZATION="0.9"

+CONFIG_VLLM_TENSOR_PARALLEL_SIZE=1

+CONFIG_VLLM_ROUTER_ENABLED=y

+CONFIG_VLLM_ROUTER_ROUND_ROBIN=y

+CONFIG_VLLM_OBSERVABILITY_ENABLED=y

+CONFIG_VLLM_GRAFANA_PORT=3000

+CONFIG_VLLM_PROMETHEUS_PORT=9090

+CONFIG_VLLM_API_PORT=8000

+CONFIG_VLLM_API_KEY=""

+CONFIG_VLLM_HF_TOKEN=""

+CONFIG_VLLM_QUICK_TEST=y

+CONFIG_VLLM_BENCHMARK_ENABLED=y

+CONFIG_VLLM_BENCHMARK_DURATION=30

+CONFIG_VLLM_BENCHMARK_CONCURRENT_USERS=5

+CONFIG_VLLM_BENCHMARK_RESULTS_DIR="/data/vllm-benchmark"

diff --git a/kconfigs/Kconfig.libvirt b/kconfigs/Kconfig.libvirt

index 95204ad1..4f296309 100644

--- a/kconfigs/Kconfig.libvirt

+++ b/kconfigs/Kconfig.libvirt

@@ -335,6 +335,7 @@ config LIBVIRT_LARGE_CPU

choice

prompt "Guest vCPUs"

+ default LIBVIRT_VCPUS_64 if KDEVOPS_WORKFLOW_DEDICATE_VLLM

default LIBVIRT_VCPUS_8

config LIBVIRT_VCPUS_2

@@ -408,6 +409,7 @@ config LIBVIRT_VCPUS_COUNT

choice

prompt "How much GiB memory to use per guest"

+ default LIBVIRT_MEM_64G if KDEVOPS_WORKFLOW_DEDICATE_VLLM

default LIBVIRT_MEM_4G

config LIBVIRT_MEM_2G

@@ -478,6 +480,7 @@ config LIBVIRT_MEM_MB

config LIBVIRT_IMAGE_SIZE

string "VM image size"

output yaml

+ default "100G" if KDEVOPS_WORKFLOW_DEDICATE_VLLM

default "20G"

depends on GUESTFS

help

diff --git a/kconfigs/workflows/Kconfig b/kconfigs/workflows/Kconfig

index 1be04c9c..5797521f 100644

--- a/kconfigs/workflows/Kconfig

+++ b/kconfigs/workflows/Kconfig

@@ -233,6 +233,14 @@ config KDEVOPS_WORKFLOW_DEDICATE_AI

This will dedicate your configuration to running only the

AI workflow for vector database performance testing.

+config KDEVOPS_WORKFLOW_DEDICATE_VLLM

+ bool "vllm"

+ select KDEVOPS_WORKFLOW_ENABLE_VLLM

+ help

+ This will dedicate your configuration to running only the

+ vLLM Production Stack workflow for deploying and benchmarking

+ large language models with Kubernetes.

+

config KDEVOPS_WORKFLOW_DEDICATE_MINIO

bool "minio"

select KDEVOPS_WORKFLOW_ENABLE_MINIO

@@ -265,6 +273,7 @@ config KDEVOPS_WORKFLOW_NAME

default "mmtests" if KDEVOPS_WORKFLOW_DEDICATE_MMTESTS

default "fio-tests" if KDEVOPS_WORKFLOW_DEDICATE_FIO_TESTS

default "ai" if KDEVOPS_WORKFLOW_DEDICATE_AI

+ default "vllm" if KDEVOPS_WORKFLOW_DEDICATE_VLLM

default "minio" if KDEVOPS_WORKFLOW_DEDICATE_MINIO

default "build-linux" if KDEVOPS_WORKFLOW_DEDICATE_BUILD_LINUX

@@ -395,6 +404,14 @@ config KDEVOPS_WORKFLOW_NOT_DEDICATED_ENABLE_AI

Select this option if you want to provision AI benchmarks on a

single target node for by-hand testing.

+config KDEVOPS_WORKFLOW_NOT_DEDICATED_ENABLE_VLLM

+ bool "vllm"

+ select KDEVOPS_WORKFLOW_ENABLE_VLLM

+ depends on LIBVIRT || TERRAFORM_PRIVATE_NET

+ help

+ Select this option if you want to provision vLLM Production Stack

+ on a single target node for by-hand testing and development.

+

endif # !WORKFLOWS_DEDICATED_WORKFLOW

config KDEVOPS_WORKFLOW_ENABLE_FSTESTS

@@ -530,6 +547,17 @@ source "workflows/ai/Kconfig"

endmenu

endif # KDEVOPS_WORKFLOW_ENABLE_AI

+config KDEVOPS_WORKFLOW_ENABLE_VLLM

+ bool

+ output yaml

+ default y if KDEVOPS_WORKFLOW_NOT_DEDICATED_ENABLE_VLLM || KDEVOPS_WORKFLOW_DEDICATE_VLLM

+

+if KDEVOPS_WORKFLOW_ENABLE_VLLM

+menu "Configure and run vLLM Production Stack"

+source "workflows/vllm/Kconfig"

+endmenu

+endif # KDEVOPS_WORKFLOW_ENABLE_VLLM

+

config KDEVOPS_WORKFLOW_ENABLE_MINIO

bool

output yaml

diff --git a/playbooks/roles/gen_hosts/defaults/main.yml b/playbooks/roles/gen_hosts/defaults/main.yml

index b0b59542..63e7a02c 100644

--- a/playbooks/roles/gen_hosts/defaults/main.yml

+++ b/playbooks/roles/gen_hosts/defaults/main.yml

@@ -30,6 +30,7 @@ kdevops_workflow_enable_sysbench: false

kdevops_workflow_enable_fio_tests: false

kdevops_workflow_enable_mmtests: false

kdevops_workflow_enable_ai: false

+kdevops_workflow_enable_vllm: false

workflows_reboot_limit: false

kdevops_use_declared_hosts: false

diff --git a/playbooks/roles/gen_hosts/tasks/main.yml b/playbooks/roles/gen_hosts/tasks/main.yml

index c4599e4e..546a0038 100644

--- a/playbooks/roles/gen_hosts/tasks/main.yml

+++ b/playbooks/roles/gen_hosts/tasks/main.yml

@@ -270,6 +270,21 @@

- ansible_hosts_template.stat.exists

- not kdevops_use_declared_hosts|default(false)|bool

+- name: Generate the Ansible hosts file for a dedicated vLLM setup

+ tags: ['hosts']

+ ansible.builtin.template:

+ src: "{{ kdevops_hosts_template }}"

+ dest: "{{ ansible_cfg_inventory }}"

+ force: true

+ trim_blocks: True

+ lstrip_blocks: True

+ mode: '0644'

+ when:

+ - kdevops_workflows_dedicated_workflow

+ - kdevops_workflow_enable_vllm|default(false)|bool

+ - ansible_hosts_template.stat.exists

+ - not kdevops_use_declared_hosts|default(false)|bool

+

- name: Verify if final host file exists

ansible.builtin.stat:

path: "{{ ansible_cfg_inventory }}"

diff --git a/playbooks/roles/gen_hosts/templates/workflows/vllm.j2 b/playbooks/roles/gen_hosts/templates/workflows/vllm.j2

new file mode 100644

index 00000000..d0564e80

--- /dev/null

+++ b/playbooks/roles/gen_hosts/templates/workflows/vllm.j2

@@ -0,0 +1,65 @@

+{# Workflow template for vLLM Production Stack #}

+[all]

+localhost ansible_connection=local

+{{ kdevops_host_prefix }}-vllm

+{% if kdevops_baseline_and_dev %}

+{{ kdevops_host_prefix }}-vllm-dev

+{% endif %}

+

+[all:vars]

+ansible_python_interpreter = "{{ kdevops_python_interpreter }}"

+

+[baseline]

+{{ kdevops_host_prefix }}-vllm

+

+[baseline:vars]

+ansible_python_interpreter = "{{ kdevops_python_interpreter }}"

+

+{% if kdevops_baseline_and_dev %}

+[dev]

+{{ kdevops_host_prefix }}-vllm-dev

+

+[dev:vars]

+ansible_python_interpreter = "{{ kdevops_python_interpreter }}"

+

+{% endif %}

+[vllm]

+{{ kdevops_host_prefix }}-vllm

+{% if kdevops_baseline_and_dev %}

+{{ kdevops_host_prefix }}-vllm-dev

+{% endif %}

+

+[vllm:vars]

+ansible_python_interpreter = "{{ kdevops_python_interpreter }}"

+

+{% if kdevops_enable_iscsi %}

+[iscsi]

+{{ kdevops_host_prefix }}-iscsi

+

+[iscsi:vars]

+ansible_python_interpreter = "{{ kdevops_python_interpreter }}"

+{% endif %}

+

+{% if kdevops_nfsd_enable %}

+[nfsd]

+{{ kdevops_host_prefix }}-nfsd

+

+[nfsd:vars]

+ansible_python_interpreter = "{{ kdevops_python_interpreter }}"

+{% endif %}

+

+{% if kdevops_smbd_enable %}

+[smbd]

+{{ kdevops_host_prefix }}-smbd

+

+[smbd:vars]

+ansible_python_interpreter = "{{ kdevops_python_interpreter }}"

+{% endif %}

+

+{% if kdevops_krb5_enable %}

+[kdc]

+{{ kdevops_host_prefix }}-kdc

+

+[kdc:vars]

+ansible_python_interpreter = "{{ kdevops_python_interpreter }}"

+{% endif %}

diff --git a/playbooks/roles/gen_nodes/defaults/main.yml b/playbooks/roles/gen_nodes/defaults/main.yml

index aa8037cd..c6275721 100644

--- a/playbooks/roles/gen_nodes/defaults/main.yml

+++ b/playbooks/roles/gen_nodes/defaults/main.yml

@@ -13,6 +13,7 @@ kdevops_workflow_enable_selftests: false

kdevops_workflow_enable_mmtests: false

kdevops_workflow_enable_fio_tests: false

kdevops_workflow_enable_ai: false

+kdevops_workflow_enable_vllm: false

kdevops_nfsd_enable: false

kdevops_smbd_enable: false

kdevops_krb5_enable: false

diff --git a/playbooks/roles/gen_nodes/tasks/main.yml b/playbooks/roles/gen_nodes/tasks/main.yml

index 716c8ec0..7a98fff4 100644

--- a/playbooks/roles/gen_nodes/tasks/main.yml

+++ b/playbooks/roles/gen_nodes/tasks/main.yml

@@ -790,6 +790,42 @@

- ai_enabled_section_types is defined

- ai_enabled_section_types | length > 0

+# vLLM Production Stack workflow nodes

+

+- name: Generate the vLLM kdevops nodes file using {{ kdevops_nodes_template }} as jinja2 source template

+ tags: ['hosts']

+ vars:

+ node_template: "{{ kdevops_nodes_template | basename }}"

+ nodes: "{{ [kdevops_host_prefix + '-vllm'] }}"

+ all_generic_nodes: "{{ [kdevops_host_prefix + '-vllm'] }}"

+ ansible.builtin.template:

+ src: "{{ node_template }}"

+ dest: "{{ topdir_path }}/{{ kdevops_nodes }}"

+ force: true

+ mode: "0644"

+ when:

+ - kdevops_workflows_dedicated_workflow

+ - kdevops_workflow_enable_vllm

+ - ansible_nodes_template.stat.exists

+ - not kdevops_baseline_and_dev

+

+- name: Generate the vLLM kdevops nodes file with dev hosts using {{ kdevops_nodes_template }} as jinja2 source template

+ tags: ['hosts']

+ vars:

+ node_template: "{{ kdevops_nodes_template | basename }}"

+ nodes: "{{ [kdevops_host_prefix + '-vllm', kdevops_host_prefix + '-vllm-dev'] }}"

+ all_generic_nodes: "{{ [kdevops_host_prefix + '-vllm', kdevops_host_prefix + '-vllm-dev'] }}"

+ ansible.builtin.template:

+ src: "{{ node_template }}"

+ dest: "{{ topdir_path }}/{{ kdevops_nodes }}"

+ force: true

+ mode: "0644"

+ when:

+ - kdevops_workflows_dedicated_workflow

+ - kdevops_workflow_enable_vllm

+ - ansible_nodes_template.stat.exists

+ - kdevops_baseline_and_dev

+

# MinIO S3 Storage Testing workflow nodes

# Multi-filesystem MinIO configurations

diff --git a/playbooks/roles/linux-mirror/tasks/main.yml b/playbooks/roles/linux-mirror/tasks/main.yml

index 007a0411..b028729f 100644

--- a/playbooks/roles/linux-mirror/tasks/main.yml

+++ b/playbooks/roles/linux-mirror/tasks/main.yml

@@ -259,6 +259,7 @@

- not install_only_git_daemon|bool

tags: ["nfs", "mirror"]

+

- name: Check if /mirror is already exported

become: true

ansible.builtin.command:

diff --git a/playbooks/roles/vllm/defaults/main.yml b/playbooks/roles/vllm/defaults/main.yml

new file mode 100644

index 00000000..739c8136

--- /dev/null

+++ b/playbooks/roles/vllm/defaults/main.yml

@@ -0,0 +1,17 @@

+---

+# vLLM role default variables

+vllm_production_stack_repo: https://github.com/vllm-project/production-stack.git

+vllm_production_stack_version: main

+vllm_local_path: /data/vllm

+vllm_results_dir: "{{ vllm_benchmark_results_dir | default('/data/vllm-benchmark') }}"

+

+# Default image versions that are known to work

+# Note: vLLM v0.10.2+ is recommended for Production Stack with CPU inference

+# - v0.6.5+ required for --no-enable-prefix-caching flag support

+# - v0.6.5-v0.6.6 have CPU inference bugs (NotImplementedError in is_async_output_supported)

+# - v0.10.2 fixes all CPU inference issues and is production ready

+# For CPU inference, use openeuler/vllm-cpu instead of vllm/vllm-openai

+vllm_engine_image_repo: "{{ 'openeuler/vllm-cpu' if vllm_use_cpu_inference | default(false) else 'vllm/vllm-openai' }}"

+vllm_engine_image_tag: "{{ 'latest' if vllm_use_cpu_inference | default(false) else 'v0.10.2' }}"

+vllm_prod_stack_router_image: ghcr.io/vllm-project/production-stack/router

+vllm_prod_stack_router_tag: latest

diff --git a/playbooks/roles/vllm/tasks/configure-docker-data.yml b/playbooks/roles/vllm/tasks/configure-docker-data.yml

new file mode 100644

index 00000000..c00b0f48

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/configure-docker-data.yml

@@ -0,0 +1,187 @@

+---

+# Configure Docker to use /data for storage to avoid filling up root filesystem

+

+- name: Ensure /data/docker directory exists

+ ansible.builtin.file:

+ path: /data/docker

+ state: directory

+ mode: '0755'

+ owner: root

+ group: root

+ become: yes

+

+- name: Check if Docker daemon.json exists

+ ansible.builtin.stat:

+ path: /etc/docker/daemon.json

+ register: docker_daemon_config

+

+- name: Read existing Docker daemon configuration

+ ansible.builtin.slurp:

+ src: /etc/docker/daemon.json

+ register: docker_daemon_json

+ when: docker_daemon_config.stat.exists

+

+- name: Parse existing Docker daemon configuration

+ set_fact:

+ docker_config: "{{ docker_daemon_json.content | b64decode | from_json }}"

+ when: docker_daemon_config.stat.exists

+

+- name: Initialize Docker configuration if not exists

+ set_fact:

+ docker_config: {}

+ when: not docker_daemon_config.stat.exists

+

+- name: Check if Docker mirror is available

+ ansible.builtin.stat:

+ path: /mirror/docker

+ register: docker_mirror_check

+

+- name: Auto-detect Docker mirror registry endpoint via 9P mount

+ set_fact:

+ docker_registry_mirrors:

+ - "http://{{ ansible_default_ipv4.gateway }}:5000"

+ docker_insecure_registries:

+ - "{{ ansible_default_ipv4.gateway }}:5000"

+ docker_mirror_type: "9p_mount"

+ when:

+ - docker_mirror_check.stat.exists

+ - docker_mirror_check.stat.isdir

+

+- name: Auto-detect Docker mirror registry endpoint via IP

+ ansible.builtin.uri:

+ url: "http://{{ ansible_default_ipv4.gateway }}:5000/v2/_catalog"

+ method: GET

+ timeout: 5

+ register: mirror_registry_check

+ failed_when: false

+ when:

+ - not docker_mirror_check.stat.exists

+ - docker_registry_mirrors is not defined

+

+- name: Set Docker registry mirror configuration via IP

+ set_fact:

+ docker_registry_mirrors:

+ - "http://{{ ansible_default_ipv4.gateway }}:5000"

+ docker_insecure_registries:

+ - "{{ ansible_default_ipv4.gateway }}:5000"

+ docker_mirror_type: "ip_gateway"

+ when:

+ - not docker_mirror_check.stat.exists

+ - mirror_registry_check.status | default(0) == 200

+

+- name: Display Docker mirror auto-detection result

+ debug:

+ msg: >-

+ Docker mirror auto-detection:

+ {% if docker_registry_mirrors is defined %}

+ ✅ Found Docker mirror at {{ docker_registry_mirrors[0] }}

+ ({{ docker_mirror_type | default('unknown') }}) - will use for faster image pulls

+ {% elif docker_mirror_check.stat.exists %}

+ ⚠️ Docker mirror directory exists but registry not accessible

+ {% else %}

+ ℹ️ No Docker mirror detected - using Docker Hub directly

+ {% endif %}

+

+- name: Update Docker configuration with data-root and optional registry mirrors

+ set_fact:

+ docker_config: >-

+ {{ docker_config | combine({'data-root': '/data/docker'}) |

+ combine({

+ 'registry-mirrors': docker_registry_mirrors,

+ 'insecure-registries': docker_insecure_registries

+ }, recursive=True)

+ if docker_registry_mirrors is defined

+ else docker_config | combine({'data-root': '/data/docker'}) }}

+

+- name: Configure Docker daemon to use /data

+ ansible.builtin.copy:

+ content: "{{ docker_config | to_nice_json }}"

+ dest: /etc/docker/daemon.json

+ mode: '0644'

+ owner: root

+ group: root

+ backup: yes

+ become: yes

+ register: docker_daemon_updated

+

+- name: Stop Docker service

+ ansible.builtin.systemd:

+ name: docker

+ state: stopped

+ become: yes

+ when: docker_daemon_updated.changed

+

+# Handle existing Docker data if present

+- name: Check if old Docker data exists

+ ansible.builtin.stat:

+ path: /var/lib/docker

+ register: old_docker_data

+

+- name: Check if Docker data directory has content

+ ansible.builtin.find:

+ paths: /var/lib/docker

+ file_type: any

+ recurse: no

+ register: docker_content

+ when: old_docker_data.stat.exists and old_docker_data.stat.isdir

+

+- name: Move existing Docker data to /data (if any exists)

+ ansible.builtin.shell:

+ cmd: "cp -a /var/lib/docker/. /data/docker/ && rm -rf /var/lib/docker"

+ become: yes

+ when:

+ - docker_daemon_updated.changed

+ - old_docker_data.stat.exists

+ - old_docker_data.stat.isdir

+ - docker_content.matched | default(0) > 0

+

+- name: Remove empty Docker data directory

+ ansible.builtin.file:

+ path: /var/lib/docker

+ state: absent

+ become: yes

+ when:

+ - docker_daemon_updated.changed

+ - old_docker_data.stat.exists

+ - docker_content.matched | default(0) == 0

+

+- name: Start Docker service

+ ansible.builtin.systemd:

+ name: docker

+ state: started

+ daemon_reload: yes

+ become: yes

+ when: docker_daemon_updated.changed

+

+- name: Ensure Docker service is enabled and running

+ ansible.builtin.systemd:

+ name: docker

+ state: started

+ enabled: yes

+ become: yes

+

+# Configure minikube to use /data as well

+- name: Ensure /data/minikube directory exists with correct ownership

+ ansible.builtin.file:

+ path: /data/minikube

+ state: directory

+ mode: '0755'

+ owner: kdevops

+ group: kdevops

+ recurse: yes

+ become: yes

+

+# Ensure vLLM specific directories use /data

+- name: Create vLLM data directories

+ ansible.builtin.file:

+ path: "{{ item }}"

+ state: directory

+ mode: '0755'

+ owner: kdevops

+ group: kdevops

+ become: yes

+ loop:

+ - /data/vllm

+ - /data/vllm/models

+ - /data/vllm/cache

+ - /data/vllm-benchmark

diff --git a/playbooks/roles/vllm/tasks/deploy-bare-metal.yml b/playbooks/roles/vllm/tasks/deploy-bare-metal.yml

new file mode 100644

index 00000000..0aaea73d

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/deploy-bare-metal.yml

@@ -0,0 +1,227 @@

+---

+# Deploy vLLM on bare metal with systemd

+- name: vLLM bare metal deployment tasks

+ block:

+ - name: Create vLLM directories

+ file:

+ path: "{{ item }}"

+ state: directory

+ mode: '0755'

+ owner: "{{ ansible_user_id }}"

+ group: "{{ ansible_user_gid }}"

+ become: yes

+ loop:

+ - "{{ vllm_bare_metal_data_dir | default('/var/lib/vllm') }}"

+ - "{{ vllm_bare_metal_log_dir | default('/var/log/vllm') }}"

+ - /etc/vllm

+

+ - name: Check GPU availability

+ ansible.builtin.command:

+ cmd: nvidia-smi -L

+ register: gpu_check

+ failed_when: false

+ changed_when: false

+

+ - name: Set GPU facts

+ set_fact:

+ has_nvidia_gpu: "{{ gpu_check.rc == 0 }}"

+ gpu_count: "{{ vllm_bare_metal_declare_host_gpu_count | default(gpu_check.stdout_lines | length if gpu_check.rc == 0 else 0) }}"

+ gpu_type: "{{ vllm_bare_metal_declare_host_gpu_type | default('auto-detected') }}"

+

+ - name: Display GPU information

+ debug:

+ msg: |

+ GPU Configuration:

+ - GPUs Available: {{ 'Yes' if has_nvidia_gpu else 'No' }}

+ - GPU Count: {{ gpu_count }}

+ {% if has_nvidia_gpu %}

+ - GPU Type: {{ gpu_type }}

+ {% endif %}

+ - Inference Mode: {{ 'GPU' if has_nvidia_gpu else 'CPU' }}

+

+ # Container-based deployment

+ - name: Deploy vLLM with container runtime

+ when: vllm_bare_metal_use_container | default(true)

+ block:

+ - name: Determine container runtime

+ set_fact:

+ container_runtime: "{{ 'docker' if vllm_bare_metal_docker | default(true) else 'podman' }}"

+

+ - name: Ensure container runtime is installed

+ package:

+ name: "{{ container_runtime }}"

+ state: present

+ become: yes

+

+ - name: Install nvidia-container-toolkit for GPU support

+ when: has_nvidia_gpu

+ package:

+ name: nvidia-container-toolkit

+ state: present

+ become: yes

+

+ - name: Configure container runtime for GPU

+ when: has_nvidia_gpu and container_runtime == 'docker'

+ ansible.builtin.command:

+ cmd: nvidia-ctk runtime configure --runtime=docker

+ become: yes

+ register: nvidia_config

+ changed_when: nvidia_config.rc == 0

+

+ - name: Restart Docker to apply GPU configuration

+ when: has_nvidia_gpu and container_runtime == 'docker' and nvidia_config.changed

+ systemd:

+ name: docker

+ state: restarted

+ become: yes

+

+ - name: Set vLLM bare metal container image with Docker mirror if enabled

+ ansible.builtin.set_fact:

+ vllm_bare_metal_image_final: >-

+ {%- if use_docker_mirror | default(false) | bool -%}

+ {%- if not has_nvidia_gpu -%}

+ localhost:{{ docker_mirror_port | default(5000) }}/vllm:v0.6.3-cpu

+ {%- else -%}

+ localhost:{{ docker_mirror_port | default(5000) }}/vllm-openai:latest

+ {%- endif -%}

+ {%- else -%}

+ {%- if not has_nvidia_gpu -%}

+ substratusai/vllm:v0.6.3-cpu

+ {%- else -%}

+ vllm/vllm-openai:latest

+ {%- endif -%}

+ {%- endif -%}

+

+ - name: Pull vLLM container image

+ community.docker.docker_image:

+ name: "{{ vllm_bare_metal_image_final }}"

+ source: pull

+

+ - name: Create vLLM systemd service for container

+ template:

+ src: vllm-container.service.j2

+ dest: "/etc/systemd/system/{{ vllm_bare_metal_service_name | default('vllm') }}.service"

+ mode: '0644'

+ become: yes

+ notify: restart vllm

+

+ # Direct installation (pip/source)

+ - name: Deploy vLLM with direct installation

+ when: not (vllm_bare_metal_use_container | default(true))

+ block:

+ - name: Ensure Python 3.8+ is installed

+ package:

+ name:

+ - python3

+ - python3-pip

+ - python3-venv

+ state: present

+ become: yes

+

+ - name: Create vLLM virtual environment

+ command:

+ cmd: python3 -m venv /opt/vllm/venv

+ creates: /opt/vllm/venv

+ become: yes

+

+ - name: Install vLLM from pip

+ pip:

+ name: vllm

+ virtualenv: /opt/vllm/venv

+ state: present

+ when: vllm_bare_metal_install_method | default('pip') == 'pip'

+ become: yes

+

+ - name: Install vLLM from source

+ when: vllm_bare_metal_install_method | default('pip') == 'source'

+ block:

+ - name: Clone vLLM repository

+ git:

+ repo: https://github.com/vllm-project/vllm.git

+ dest: /opt/vllm/src

+ version: main

+ become: yes

+

+ - name: Install vLLM from source

+ pip:

+ name: /opt/vllm/src

+ virtualenv: /opt/vllm/venv

+ editable: true

+ become: yes

+

+ - name: Create vLLM systemd service for direct installation

+ template:

+ src: vllm-direct.service.j2

+ dest: "/etc/systemd/system/{{ vllm_bare_metal_service_name | default('vllm') }}.service"

+ mode: '0644'

+ become: yes

+ notify: restart vllm

+

+ - name: Create vLLM configuration file

+ template:

+ src: vllm.conf.j2

+ dest: /etc/vllm/vllm.conf

+ mode: '0644'

+ become: yes

+ notify: restart vllm

+

+ - name: Reload systemd daemon

+ systemd:

+ daemon_reload: yes

+ become: yes

+

+ - name: Start and enable vLLM service

+ systemd:

+ name: "{{ vllm_bare_metal_service_name | default('vllm') }}"

+ state: started

+ enabled: yes

+ become: yes

+

+ - name: Wait for vLLM to be ready

+ uri:

+ url: "http://localhost:{{ vllm_api_port | default(8000) }}/health"

+ status_code: 200

+ register: health_check

+ until: health_check.status == 200

+ retries: 30

+ delay: 5

+

+ - name: Get vLLM models

+ uri:

+ url: "http://localhost:{{ vllm_api_port | default(8000) }}/v1/models"

+ method: GET

+ register: models_response

+

+ - name: Display deployment information

+ debug:

+ msg: |

+ vLLM deployed successfully on bare metal!

+

+ Service: {{ vllm_bare_metal_service_name | default('vllm') }}

+ Status: Active

+ API Endpoint: http://{{ ansible_default_ipv4.address }}:{{ vllm_api_port | default(8000) }}

+

+ Available Models:

+ {% for model in models_response.json.data %}

+ - {{ model.id }}

+ {% endfor %}

+

+ GPU Configuration:

+ - Mode: {{ 'GPU-accelerated' if has_nvidia_gpu else 'CPU-only' }}

+ {% if has_nvidia_gpu %}

+ - GPUs: {{ gpu_count }}

+ - Type: {{ gpu_type }}

+ {% endif %}

+

+ Service Management:

+ - Start: sudo systemctl start {{ vllm_bare_metal_service_name | default('vllm') }}

+ - Stop: sudo systemctl stop {{ vllm_bare_metal_service_name | default('vllm') }}

+ - Status: sudo systemctl status {{ vllm_bare_metal_service_name | default('vllm') }}

+ - Logs: sudo journalctl -u {{ vllm_bare_metal_service_name | default('vllm') }} -f

+

+# Handler for restarting vLLM

+- name: restart vllm

+ systemd:

+ name: "{{ vllm_bare_metal_service_name | default('vllm') }}"

+ state: restarted

+ become: yes

diff --git a/playbooks/roles/vllm/tasks/deploy-docker.yml b/playbooks/roles/vllm/tasks/deploy-docker.yml

new file mode 100644

index 00000000..be80eb74

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/deploy-docker.yml

@@ -0,0 +1,105 @@

+---

+# Deploy vLLM using latest Docker images

+- name: vLLM Docker deployment tasks

+ block:

+ - name: Ensure Docker service is started and enabled

+ ansible.builtin.systemd:

+ name: docker

+ state: started

+ enabled: yes

+ become: yes

+

+ - name: Add current user to docker group

+ ansible.builtin.user:

+ name: "{{ ansible_user_id }}"

+ groups: docker

+ append: yes

+ become: yes

+

+ - name: Ensure docker socket has correct permissions

+ ansible.builtin.file:

+ path: /var/run/docker.sock

+ mode: '0666'

+ become: yes

+

+ - name: Reset connection to apply docker group membership

+ meta: reset_connection

+

+ - name: Setup Kubernetes environment

+ ansible.builtin.import_tasks: tasks/setup-kubernetes.yml

+ when: vllm_k8s_minikube | default(false) or vllm_k8s_existing | default(false)

+

+ - name: Create vLLM local directory

+ file:

+ path: "{{ vllm_local_path | default('/data/vllm') }}"

+ state: directory

+ mode: '0755'

+

+ - name: Create results directory

+ file:

+ path: "{{ vllm_results_dir | default('/data/vllm-benchmark') }}"

+ state: directory

+ mode: '0755'

+

+ - name: Set vLLM Docker image with mirror if enabled

+ ansible.builtin.set_fact:

+ vllm_docker_image_final: >-

+ {%- if use_docker_mirror | default(false) | bool -%}

+ {%- if vllm_use_cpu_inference | default(false) -%}

+ localhost:{{ docker_mirror_port | default(5000) }}/vllm:v0.6.3-cpu

+ {%- else -%}

+ localhost:{{ docker_mirror_port | default(5000) }}/vllm-openai:latest

+ {%- endif -%}

+ {%- else -%}

+ {%- if vllm_use_cpu_inference | default(false) -%}

+ substratusai/vllm:v0.6.3-cpu

+ {%- else -%}

+ vllm/vllm-openai:latest

+ {%- endif -%}

+ {%- endif -%}

+

+ - name: Generate vLLM deployment manifest

+ template:

+ src: vllm-deployment.yaml.j2

+ dest: "{{ vllm_local_path | default('/data/vllm') }}/vllm-deployment.yaml"

+ mode: '0644'

+

+ - name: Deploy vLLM using kubectl

+ become: no

+ ansible.builtin.command:

+ cmd: kubectl apply -f {{ vllm_local_path | default('/data/vllm') }}/vllm-deployment.yaml

+ register: kubectl_apply

+ changed_when: "'created' in kubectl_apply.stdout or 'configured' in kubectl_apply.stdout"

+

+ - name: Wait for vLLM pods to be ready

+ become: no

+ kubernetes.core.k8s_info:

+ api_version: v1

+ kind: Pod

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ label_selectors:

+ - app=vllm-server

+ register: pod_list

+ until: pod_list.resources | length > 0 and pod_list.resources | selectattr('status.phase', 'equalto', 'Running') | list | length == pod_list.resources | length

+ retries: 30

+ delay: 10

+

+ - name: Get vLLM service endpoint

+ become: no

+ kubernetes.core.k8s_info:

+ api_version: v1

+ kind: Service

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ name: vllm-service

+ register: vllm_service

+

+ - name: Display vLLM endpoint information

+ debug:

+ msg: |

+ vLLM deployed successfully!

+ {% if vllm_k8s_type | default('minikube') == 'minikube' %}

+ To access the API, run: kubectl port-forward -n {{ vllm_helm_namespace | default('vllm-system') }} svc/vllm-service {{ vllm_api_port | default(8000) }}:8000

+ Then access: http://localhost:{{ vllm_api_port | default(8000) }}/v1/models

+ {% else %}

+ API endpoint: {{ vllm_service.resources[0].status.loadBalancer.ingress[0].ip | default('pending') }}:{{ vllm_api_port | default(8000) }}

+ {% endif %}

diff --git a/playbooks/roles/vllm/tasks/deploy-production-stack.yml b/playbooks/roles/vllm/tasks/deploy-production-stack.yml

new file mode 100644

index 00000000..6cf95e0b

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/deploy-production-stack.yml

@@ -0,0 +1,252 @@

+---

+# Deploy vLLM Production Stack using official Helm charts

+- name: vLLM Production Stack deployment tasks

+ block:

+ - name: Setup Kubernetes environment

+ ansible.builtin.import_tasks: tasks/setup-kubernetes.yml

+

+ - name: Ensure Helm is installed

+ ansible.builtin.import_tasks: tasks/setup-helm.yml

+

+ - name: Use default vLLM engine image (Docker mirror acts as pull-through cache)

+ ansible.builtin.set_fact:

+ vllm_engine_image_final: "{{ vllm_engine_image_repo }}"

+

+ - name: Use default router image (Docker mirror acts as pull-through cache)

+ ansible.builtin.set_fact:

+ vllm_router_image_final: "{{ vllm_prod_stack_router_image | default('ghcr.io/vllm-project/production-stack/router') }}"

+

+ - name: Add vLLM Production Stack Helm repository

+ kubernetes.core.helm_repository:

+ name: vllm-prod-stack

+ repo_url: "{{ vllm_prod_stack_repo | default('https://vllm-project.github.io/production-stack') }}"

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+

+ - name: Update Helm repositories

+ ansible.builtin.command:

+ cmd: helm repo update

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+ changed_when: false

+

+ - name: Verify kubectl context and cluster connectivity

+ ansible.builtin.command:

+ cmd: kubectl cluster-info --request-timeout=30s

+ register: cluster_info

+ retries: 3

+ delay: 10

+ until: cluster_info.rc == 0

+ failed_when: cluster_info.rc != 0

+

+ - name: Set kubectl context for Helm operations

+ ansible.builtin.command:

+ cmd: kubectl config use-context minikube

+ when: vllm_k8s_minikube | default(false)

+ ignore_errors: yes

+

+ - name: Create vLLM local directory

+ ansible.builtin.file:

+ path: "{{ vllm_local_path | default('/data/vllm') }}"

+ state: directory

+ mode: '0755'

+ become: yes

+

+ - name: Create vLLM namespace

+ kubernetes.core.k8s:

+ name: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ api_version: v1

+ kind: Namespace

+ state: present

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+

+ - name: Generate Helm values file for Production Stack

+ template:

+ src: vllm-prod-stack-official-values.yaml.j2

+ dest: "{{ vllm_local_path | default('/data/vllm') }}/prod-stack-values.yaml"

+ mode: '0644'

+ when: not (vllm_prod_stack_custom_values | default(false))

+

+ - name: Copy custom Helm values file

+ copy:

+ src: "{{ vllm_prod_stack_values_path }}"

+ dest: "{{ vllm_local_path | default('/data/vllm') }}/prod-stack-values.yaml"

+ mode: '0644'

+ when: vllm_prod_stack_custom_values | default(false)

+

+ - name: Deploy vLLM Production Stack with Helm

+ kubernetes.core.helm:

+ name: "{{ vllm_helm_release_name | default('vllm-prod') }}-{{ inventory_hostname_short }}"

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ chart_ref: vllm-prod-stack/vllm-stack

+ values_files:

+ - "{{ vllm_local_path | default('/data/vllm') }}/prod-stack-values.yaml"

+ wait: true

+ timeout: 30m

+ chart_version: "{{ vllm_prod_stack_chart_version if vllm_prod_stack_chart_version != 'latest' else omit }}"

+ force: true # Force reinstall if needed

+ atomic: false # Don't rollback on failure to help debugging

+ create_namespace: true

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+ register: helm_deploy

+ retries: 2

+ delay: 30

+ until: helm_deploy is succeeded

+

+ - name: Wait for vLLM engine pods to be ready

+ kubernetes.core.k8s_info:

+ api_version: v1

+ kind: Pod

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ label_selectors:

+ - model={{ vllm_model_name }}

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+ register: engine_pods

+ until: >

+ engine_pods.resources | length > 0 and

+ engine_pods.resources | selectattr('status.phase', 'equalto', 'Running') | list | length == engine_pods.resources | length

+ retries: 30

+ delay: 10

+

+ - name: Wait for router pod to be ready

+ kubernetes.core.k8s_info:

+ api_version: v1

+ kind: Deployment

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ name: "{{ vllm_helm_release_name | default('vllm-prod') }}-{{ inventory_hostname_short }}-deployment-router"

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+ register: router_deployment

+ until: >

+ router_deployment.resources | length > 0 and

+ router_deployment.resources[0].status.readyReplicas | default(0) > 0 and

+ router_deployment.resources[0].status.readyReplicas == router_deployment.resources[0].status.replicas

+ retries: 20

+ delay: 5

+ when: vllm_router_enabled | default(true)

+

+ - name: Check if monitoring components exist

+ kubernetes.core.k8s_info:

+ api_version: v1

+ kind: Pod

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ label_selectors:

+ - app.kubernetes.io/name=prometheus

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+ register: prometheus_check

+ ignore_errors: yes

+ when: vllm_prod_stack_enable_monitoring | default(true)

+

+ - name: Setup monitoring stack

+ when:

+ - vllm_prod_stack_enable_monitoring | default(true)

+ - prometheus_check is defined

+ - prometheus_check.resources | length > 0

+ block:

+ - name: Wait for Prometheus to be ready

+ kubernetes.core.k8s_info:

+ api_version: v1

+ kind: Pod

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ label_selectors:

+ - app.kubernetes.io/name=prometheus

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+ register: prometheus_pod

+ until: prometheus_pod.resources | length > 0 and prometheus_pod.resources[0].status.phase == "Running"

+ retries: 20

+ delay: 5

+

+ - name: Wait for Grafana to be ready

+ kubernetes.core.k8s_info:

+ api_version: v1

+ kind: Pod

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ label_selectors:

+ - app.kubernetes.io/name=grafana

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+ register: grafana_pod

+ until: grafana_pod.resources | length > 0 and grafana_pod.resources[0].status.phase == "Running"

+ retries: 20

+ delay: 5

+

+ - name: Get service endpoints

+ kubernetes.core.k8s_info:

+ api_version: v1

+ kind: Service

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ environment:

+ KUBECONFIG: "{{ '/root/.kube/config' if vllm_k8s_minikube | default(false) else '~/.kube/config' }}"

+ MINIKUBE_HOME: "{{ '/data/minikube' if vllm_k8s_minikube | default(false) else omit }}"

+ become: "{{ true if vllm_k8s_minikube | default(false) else false }}"

+ register: services

+

+ - name: Display deployment information

+ debug:

+ msg: |

+ vLLM Production Stack deployed successfully!

+

+ Services available:

+ {% for service in services.resources %}

+ - {{ service.metadata.name }}: {{ service.spec.type }}

+ {% if service.spec.type == 'LoadBalancer' and service.status.loadBalancer.ingress is defined %}

+ External IP: {{ service.status.loadBalancer.ingress[0].ip | default('pending') }}

+ {% endif %}

+ {% endfor %}

+

+ {% if vllm_k8s_minikube | default(false) %}

+ To access services on Minikube:

+ - API: kubectl port-forward -n {{ vllm_helm_namespace }} svc/vllm-router {{ vllm_api_port }}:8000

+ {% if vllm_prod_stack_enable_monitoring | default(true) %}

+ - Grafana: kubectl port-forward -n {{ vllm_helm_namespace }} svc/grafana {{ vllm_grafana_port }}:3000

+ - Prometheus: kubectl port-forward -n {{ vllm_helm_namespace }} svc/prometheus {{ vllm_prometheus_port }}:9090

+ {% endif %}

+ {% endif %}

+

+ - name: Setup autoscaling

+ when: vllm_prod_stack_enable_autoscaling | default(false)

+ kubernetes.core.k8s:

+ state: present

+ definition:

+ apiVersion: autoscaling/v2

+ kind: HorizontalPodAutoscaler

+ metadata:

+ name: vllm-engine-hpa

+ namespace: "{{ vllm_helm_namespace | default('vllm-system') }}"

+ spec:

+ scaleTargetRef:

+ apiVersion: apps/v1

+ kind: Deployment

+ name: vllm-engine

+ minReplicas: "{{ vllm_prod_stack_min_replicas | default(1) }}"

+ maxReplicas: "{{ vllm_prod_stack_max_replicas | default(5) }}"

+ metrics:

+ - type: Resource

+ resource:

+ name: "{{ 'nvidia.com/gpu' if not (vllm_use_cpu_inference | default(false)) else 'cpu' }}"

+ target:

+ type: Utilization

+ averageUtilization: "{{ vllm_prod_stack_target_gpu_utilization | default(80) }}"

diff --git a/playbooks/roles/vllm/tasks/install-deps/debian/main.yml b/playbooks/roles/vllm/tasks/install-deps/debian/main.yml

new file mode 100644

index 00000000..12a8a8e3

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/install-deps/debian/main.yml

@@ -0,0 +1,70 @@

+---

+- name: Update apt cache

+ become: true

+ become_method: sudo

+ ansible.builtin.apt:

+ update_cache: true

+ tags: vllm

+

+- name: Install vLLM system dependencies

+ become: true

+ become_method: sudo

+ ansible.builtin.apt:

+ name:

+ - git

+ - curl

+ - wget

+ - python3

+ - python3-venv

+ - docker.io

+ - ca-certificates

+ - gnupg

+ - lsb-release

+ - apt-transport-https

+ - iptables

+ - conntrack

+ state: present

+ update_cache: true

+ tags: ["vllm", "deps"]

+

+- name: Install Python development dependencies

+ become: true

+ become_method: sudo

+ ansible.builtin.apt:

+ name:

+ - python3-dev

+ - python3-setuptools

+ - python3-wheel

+ - build-essential

+ state: present

+ tags: ["vllm", "deps"]

+

+- name: Install Python benchmarking dependencies

+ become: true

+ become_method: sudo

+ ansible.builtin.apt:

+ name:

+ - python3-aiohttp

+ - python3-numpy

+ - python3-pandas

+ - python3-matplotlib

+ state: present

+ tags: ["vllm", "deps", "benchmark"]

+

+- name: Install Python Kubernetes client library

+ become: true

+ become_method: sudo

+ ansible.builtin.apt:

+ name:

+ - python3-kubernetes

+ state: present

+ tags: ["vllm", "deps"]

+

+- name: Add kdevops user to docker group

+ become: true

+ become_method: sudo

+ ansible.builtin.user:

+ name: kdevops

+ groups: docker

+ append: yes

+ tags: ["vllm", "deps", "docker-config"]

diff --git a/playbooks/roles/vllm/tasks/install-deps/main.yml b/playbooks/roles/vllm/tasks/install-deps/main.yml

new file mode 100644

index 00000000..6b637133

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/install-deps/main.yml

@@ -0,0 +1,12 @@

+---

+- ansible.builtin.include_role:

+ name: pkg

+

+# tasks to install dependencies for vLLM

+- name: vLLM distribution specific setup

+ ansible.builtin.import_tasks: tasks/install-deps/debian/main.yml

+ when: ansible_facts['os_family']|lower == 'debian'

+- ansible.builtin.import_tasks: tasks/install-deps/suse/main.yml

+ when: ansible_facts['os_family']|lower == 'suse'

+- ansible.builtin.import_tasks: tasks/install-deps/redhat/main.yml

+ when: ansible_facts['os_family']|lower == 'redhat'

diff --git a/playbooks/roles/vllm/tasks/install-deps/redhat/main.yml b/playbooks/roles/vllm/tasks/install-deps/redhat/main.yml

new file mode 100644

index 00000000..12efb9e1

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/install-deps/redhat/main.yml

@@ -0,0 +1,108 @@

+---

+- name: Install vLLM system dependencies

+ become: true

+ become_method: sudo

+ ansible.builtin.yum:

+ name:

+ - git

+ - curl

+ - wget

+ - python3

+ - docker

+ - ca-certificates

+ - gnupg

+ state: present

+ when: ansible_distribution_major_version|int <= 7

+ tags: ["vllm", "deps"]

+

+- name: Install vLLM system dependencies (dnf)

+ become: true

+ become_method: sudo

+ ansible.builtin.dnf:

+ name:

+ - git

+ - curl

+ - wget

+ - python3

+ - docker

+ - ca-certificates

+ - gnupg

+ state: present

+ when: ansible_distribution_major_version|int >= 8

+ tags: ["vllm", "deps"]

+

+- name: Install Python development dependencies

+ become: true

+ become_method: sudo

+ ansible.builtin.yum:

+ name:

+ - python3-devel

+ - python3-setuptools

+ - python3-wheel

+ - gcc

+ - gcc-c++

+ - make

+ state: present

+ when: ansible_distribution_major_version|int <= 7

+ tags: ["vllm", "deps"]

+

+- name: Install Python development dependencies (dnf)

+ become: true

+ become_method: sudo

+ ansible.builtin.dnf:

+ name:

+ - python3-devel

+ - python3-setuptools

+ - python3-wheel

+ - gcc

+ - gcc-c++

+ - make

+ state: present

+ when: ansible_distribution_major_version|int >= 8

+ tags: ["vllm", "deps"]

+

+- name: Install Python benchmarking dependencies (yum)

+ become: true

+ become_method: sudo

+ ansible.builtin.yum:

+ name:

+ - python3-aiohttp

+ - python3-numpy

+ - python3-pandas

+ - python3-matplotlib

+ state: present

+ when: ansible_distribution_major_version|int <= 7

+ tags: ["vllm", "deps", "benchmark"]

+

+- name: Install Python benchmarking dependencies (dnf)

+ become: true

+ become_method: sudo

+ ansible.builtin.dnf:

+ name:

+ - python3-aiohttp

+ - python3-numpy

+ - python3-pandas

+ - python3-matplotlib

+ state: present

+ when: ansible_distribution_major_version|int >= 8

+ tags: ["vllm", "deps", "benchmark"]

+

+- name: Install Python Kubernetes client library (yum)

+ become: true

+ become_method: sudo

+ ansible.builtin.yum:

+ name:

+ - python3-kubernetes

+ state: present

+ when: ansible_distribution_major_version|int <= 7

+ tags: ["vllm", "deps"]

+

+- name: Install Python Kubernetes client library (dnf)

+ become: true

+ become_method: sudo

+ ansible.builtin.dnf:

+ name:

+ - python3-kubernetes

+ state: present

+ when: ansible_distribution_major_version|int >= 8

+ tags: ["vllm", "deps"]

diff --git a/playbooks/roles/vllm/tasks/install-deps/suse/main.yml b/playbooks/roles/vllm/tasks/install-deps/suse/main.yml

new file mode 100644

index 00000000..fcb17d94

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/install-deps/suse/main.yml

@@ -0,0 +1,50 @@

+---

+- name: Install vLLM system dependencies

+ become: true

+ become_method: sudo

+ ansible.builtin.zypper:

+ name:

+ - git

+ - curl

+ - wget

+ - python3

+ - docker

+ - ca-certificates

+ - gnupg

+ state: present

+ tags: ["vllm", "deps"]

+

+- name: Install Python development dependencies

+ become: true

+ become_method: sudo

+ ansible.builtin.zypper:

+ name:

+ - python3-devel

+ - python3-setuptools

+ - python3-wheel

+ - gcc

+ - gcc-c++

+ - make

+ state: present

+ tags: ["vllm", "deps"]

+

+- name: Install Python benchmarking dependencies

+ become: true

+ become_method: sudo

+ ansible.builtin.zypper:

+ name:

+ - python3-aiohttp

+ - python3-numpy

+ - python3-pandas

+ - python3-matplotlib

+ state: present

+ tags: ["vllm", "deps", "benchmark"]

+

+- name: Install Python Kubernetes client library

+ become: true

+ become_method: sudo

+ ansible.builtin.zypper:

+ name:

+ - python3-kubernetes

+ state: present

+ tags: ["vllm", "deps"]

diff --git a/playbooks/roles/vllm/tasks/main.yml b/playbooks/roles/vllm/tasks/main.yml

new file mode 100644

index 00000000..d6b239f4

--- /dev/null

+++ b/playbooks/roles/vllm/tasks/main.yml

@@ -0,0 +1,591 @@

+---

+# First ensure we have the data partition for vLLM storage

+- ansible.builtin.include_role:

+ name: create_data_partition

+ tags: ["data_partition", "vllm-storage"]

+

+# Set up Docker mirror 9P mount if available and configured

+- ansible.builtin.import_role:

+ name: docker_mirror_9p

+ tags: ["deps", "docker-config"]

+

+- name: Set vLLM workflow variables

+ set_fact:

+ vllm_workflow_enabled: true

+ tags: vars

+

+- name: Install vLLM dependencies

+ ansible.builtin.import_tasks: tasks/install-deps/main.yml

+ tags: ["vllm", "deps"]

+

+# Configure Docker and storage to use /data partition BEFORE starting any containers

+- name: Configure Docker to use /data for storage

+ ansible.builtin.import_tasks: tasks/configure-docker-data.yml

+ tags: ["deps", "docker-config", "storage", "vllm-deploy"]

+

+# Route to appropriate deployment method based on configuration

+- name: Deploy vLLM using latest Docker images

+ ansible.builtin.import_tasks: tasks/deploy-docker.yml

+ when: vllm_deployment_type | default('docker') == 'docker'

+ tags: ["vllm-deploy"]

+

+- name: Deploy vLLM Production Stack with Helm

+ ansible.builtin.import_tasks: tasks/deploy-production-stack.yml

+ when: vllm_deployment_type | default('docker') == 'production-stack'

+ tags: ["vllm-deploy"]

+

+- name: Deploy vLLM on bare metal

+ ansible.builtin.import_tasks: tasks/deploy-bare-metal.yml

+ when: vllm_deployment_type | default('docker') == 'bare-metal'

+ tags: ["vllm-deploy"]

+

+# Legacy deployment block - will be moved to deploy-docker.yml

+- name: vLLM deployment tasks (legacy)

+ tags: vllm-deploy

+ when: vllm_deployment_type | default('docker') != 'production-stack'

+ block:

+ - name: Ensure Docker service is started and enabled

+ ansible.builtin.systemd:

+ name: docker

+ state: started

+ enabled: yes

+ become: yes

+

+ - name: Add current user to docker group

+ ansible.builtin.user:

+ name: "{{ ansible_user_id }}"

+ groups: docker

+ append: yes

+ become: yes

+

+ - name: Ensure docker socket has correct permissions

+ ansible.builtin.file:

+ path: /var/run/docker.sock

+ mode: '0666'

+ become: yes

+

+ - name: Reset connection to apply docker group membership

+ meta: reset_connection

+

+ - name: Wait for Docker to be accessible

+ ansible.builtin.wait_for:

+ path: /var/run/docker.sock

+ state: present

+ timeout: 30

+

+ - name: Test Docker access

+ ansible.builtin.command:

+ cmd: docker version

+ register: docker_test

+ become: no

+ failed_when: false

+ changed_when: false

+ retries: 3

+ delay: 2

+ until: docker_test.rc == 0

+

+ - name: Check if kubectl exists

+ ansible.builtin.stat:

+ path: /usr/local/bin/kubectl

+ register: kubectl_stat

+

+ - name: Get latest kubectl version

+ when: not kubectl_stat.stat.exists

+ ansible.builtin.uri:

+ url: https://dl.k8s.io/release/stable.txt

+ return_content: yes

+ register: kubectl_version

+

+ - name: Download kubectl

+ when: not kubectl_stat.stat.exists

+ ansible.builtin.get_url:

+ url: "https://dl.k8s.io/release/{{ kubectl_version.content | trim }}/bin/linux/amd64/kubectl"

+ dest: /tmp/kubectl

+ mode: '0755'

+

+ - name: Install kubectl

+ when: not kubectl_stat.stat.exists

+ ansible.builtin.copy:

+ src: /tmp/kubectl

+ dest: /usr/local/bin/kubectl

+ mode: '0755'

+ remote_src: yes

+

+ - name: Check if helm exists

+ ansible.builtin.stat:

+ path: /usr/local/bin/helm

+ register: helm_stat

+

+ - name: Download Helm installer script

+ when: not helm_stat.stat.exists

+ ansible.builtin.get_url:

+ url: https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-3

+ dest: /tmp/get-helm-3.sh

+ mode: '0755'

+

+ - name: Install Helm

+ when: not helm_stat.stat.exists

+ ansible.builtin.command:

+ cmd: /tmp/get-helm-3.sh

+ environment:

+ HELM_INSTALL_DIR: /usr/local/bin

+

+ - name: Check if minikube exists

+ when: vllm_k8s_type | default('minikube') == 'minikube'

+ ansible.builtin.stat:

+ path: /usr/local/bin/minikube

+ register: minikube_stat

+

+ - name: Download Minikube

+ when:

+ - vllm_k8s_type | default('minikube') == 'minikube'

+ - not minikube_stat.stat.exists

+ ansible.builtin.get_url:

+ url: https://storage.googleapis.com/minikube/releases/latest/minikube-linux-amd64

+ dest: /tmp/minikube-linux-amd64

+ mode: '0755'

+

+ - name: Install Minikube

+ when:

+ - vllm_k8s_type | default('minikube') == 'minikube'

+ - not minikube_stat.stat.exists

+ ansible.builtin.copy:

+ src: /tmp/minikube-linux-amd64

+ dest: /usr/local/bin/minikube

+ mode: '0755'

+ remote_src: yes

+

+ - name: Get available system memory

+ ansible.builtin.command:

+ cmd: free -m

+ register: memory_info

+ changed_when: false

+

+ - name: Calculate minikube memory allocation

+ set_fact:

+ minikube_memory_mb: >-

+ {%- set total_mem = memory_info.stdout_lines[1].split()[1] | int -%}

+ {%- set requested_mem = (vllm_request_memory | default('16Gi') | regex_replace('Gi', '') | int) * 1024 -%}

+ {%- set available_mem = (total_mem * 0.8) | int -%}

+ {{ [requested_mem, available_mem, 3072] | min }}

+

+ - name: Calculate minikube CPU allocation

+ set_fact:

+ minikube_cpus: >-

+ {%- set requested_cpus = vllm_request_cpu | default(4) | int -%}

+ {%- set available_cpus = ansible_processor_vcpus | default(4) | int -%}

+ {{ [requested_cpus, available_cpus] | min }}

+

+ - name: Check if Minikube is already running

+ when: vllm_k8s_type | default('minikube') == 'minikube'

+ become: no

+ ansible.builtin.command:

+ cmd: minikube status --format={{ "{{.Host}}" }}

+ register: minikube_status

+ changed_when: false

+ failed_when: false

+

+ - name: Ensure Minikube directory permissions

+ when: vllm_k8s_type | default('minikube') == 'minikube'

+ ansible.builtin.file:

+ path: /data/minikube

+ state: directory

+ owner: "kdevops"

+ group: "kdevops"

+ mode: '0755'

+ recurse: yes

+ become: yes

+

+ - name: Display minikube start parameters

+ when:

+ - vllm_k8s_type | default('minikube') == 'minikube'

+ - minikube_status.stdout != 'Running'

+ debug:

+ msg: "Starting minikube with {{ minikube_cpus }} CPUs, {{ minikube_memory_mb }}MB RAM, 50GB disk. This may take 5-10 minutes on first run..."

+

+ - name: Start Minikube cluster

+ when:

+ - vllm_k8s_type | default('minikube') == 'minikube'

+ - minikube_status.stdout != 'Running'

+ become: no

+ ansible.builtin.command:

+ cmd: minikube start --driver=docker --cpus={{ minikube_cpus }} --memory={{ minikube_memory_mb }} --disk-size=50g --insecure-registry="{{ ansible_default_ipv4.gateway }}:5000"

+ environment:

+ MINIKUBE_HOME: /data/minikube

+ register: minikube_start

+ changed_when: "'Done!' in minikube_start.stdout"

+ async: 600 # Allow up to 10 minutes

+ poll: 30 # Check every 30 seconds

+

+ - name: Enable GPU support in Minikube (if available)

+ when:

+ - vllm_k8s_type | default('minikube') == 'minikube'

+ - not (vllm_use_cpu_inference | default(false))

+ - vllm_request_gpu | default(1) | int > 0

+ become: no

+ ansible.builtin.command:

+ cmd: minikube addons enable nvidia-gpu-device-plugin

+ ignore_errors: yes

+

+ - name: Disable GPU support in Minikube for CPU inference